The Problem

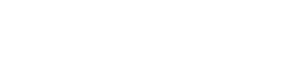

Our client approached us to develop an AI-driven assistant called Raia (Remote Artificial Intelligence Assistance) that could serve several practical functions. The client wanted Raia to operate within Slack and to support sign-up by both organizations and individuals. Users would then choose which Raia “persona” to use, with each persona serving a different purpose.

Our Solution

To satisfy the client’s requirements, we built a Slackbot server that receives and responds to user messages and requests using the GPT-3 large language model. Users can ask their chatbot persona to execute tasks that require third-party API access, such as logging into GitHub and compiling code, all within Slack. For the minimum viable product, we started with a DevOps persona at the client’s request. More personas are on the way, along with Microsoft Teams integration.

What We Did

GPT Integration

We integrated the OpenAI GPT API to enable natural language processing and response generation. This allows Raia to understand user requests and respond intelligently.
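
As a minimal sketch of the request flow described above, the handler below routes one Slack message event to the model and posts the answer back. The names `handle_message`, `complete`, and `post_reply` are hypothetical, and the model call and Slack client are passed in as plain callables so the example does not assume any particular SDK signature. This illustrates the idea only and is not the production implementation.

```python
from typing import Callable, Optional

# Hypothetical callable types: in a real deployment `complete` would wrap the
# OpenAI API call and `post_reply` would wrap the Slack client.
Complete = Callable[[str], str]
PostReply = Callable[[str, str], None]


def handle_message(event: dict, complete: Complete, post_reply: PostReply) -> Optional[str]:
    """Route one Slack message event to the language model and post the answer."""
    # Skip messages sent by bots (including Raia itself) to avoid reply loops.
    if event.get("bot_id"):
        return None
    text = (event.get("text") or "").strip()
    if not text:
        return None
    answer = complete(text)
    post_reply(event["channel"], answer)
    return answer
```

Injecting the two callables keeps the routing logic testable without network access, and the same handler could later sit behind a different chat platform.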

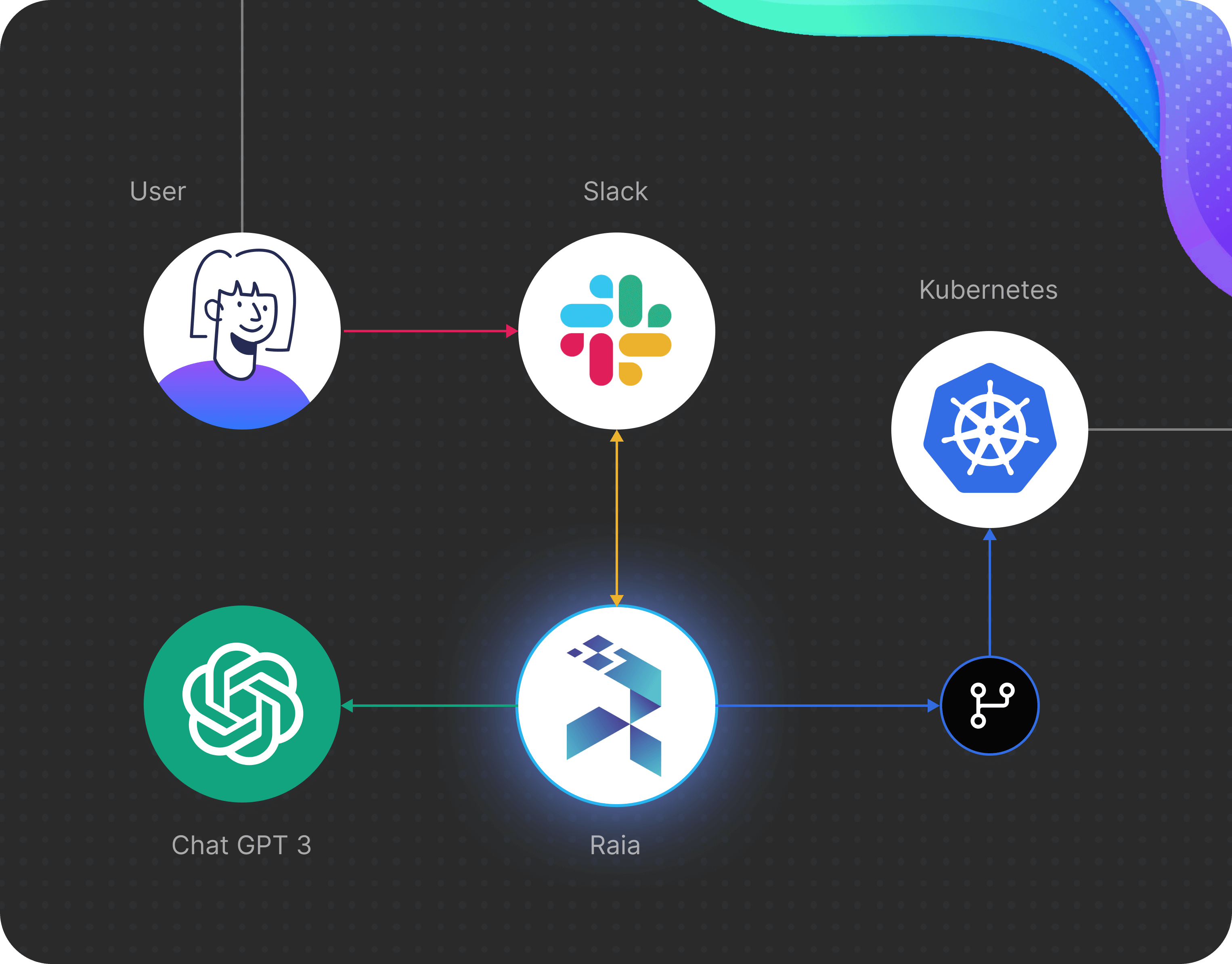

End-User Dashboard

We built a dashboard where end users sign up, choose a persona, and interact with Raia. From the dashboard, users can customize Raia to their needs.

Raia Personas

We created multiple personas, such as DevOps and Customer Support, to serve different user needs. Each persona lets Raia take on a specialized role and set of capabilities based on what the user requires. More personas are in development to expand Raia’s capabilities.
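
One way to picture a persona is as a named bundle of instructions and permitted integrations. The sketch below assumes that model; the `Persona` class, the registry contents, the prompt wording, and the tool names are all illustrative and are not taken from the Raia codebase.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Persona:
    name: str
    system_prompt: str        # instructions prepended to every request
    allowed_tools: frozenset  # integrations this persona may call


# Hypothetical registry; prompts and tool names are illustrative only.
PERSONAS = {
    "devops": Persona(
        "devops", "You are a DevOps assistant.", frozenset({"github", "kubernetes"})
    ),
    "customer_support": Persona(
        "customer_support", "You are a customer support assistant.", frozenset()
    ),
}


def build_prompt(persona_key: str, user_message: str) -> str:
    """Prefix the user's message with the selected persona's instructions."""
    persona = PERSONAS[persona_key]
    return f"{persona.system_prompt}\n\nUser: {user_message}\nAssistant:"


def can_use(persona_key: str, tool: str) -> bool:
    """Check whether the persona is permitted to call a given integration."""
    return tool in PERSONAS[persona_key].allowed_tools
```

Under this assumption, adding a persona means adding one registry entry rather than changing the request-handling code.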

GitHub Integration

We added GitHub integration so that users can push code and trigger workflows. Users can compose code snippets in conversation with Raia, and those snippets are automatically posted to GitHub repositories.
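
The source does not say which GitHub mechanism Raia uses to post snippets. As one possibility, the sketch below builds (but does not send) a request for GitHub's REST "create or update file contents" endpoint. The function name `build_commit_request` and the default branch are assumptions made for illustration.

```python
import base64
import json


def build_commit_request(owner: str, repo: str, path: str, snippet: str,
                         message: str, branch: str = "main") -> dict:
    """Build, without sending, a request that commits one snippet as a file."""
    body = {
        "message": message,
        # The endpoint expects the file content Base64-encoded.
        "content": base64.b64encode(snippet.encode("utf-8")).decode("ascii"),
        "branch": branch,
    }
    return {
        "method": "PUT",
        "url": f"https://api.github.com/repos/{owner}/{repo}/contents/{path}",
        "body": json.dumps(body),
    }
```

Updating an existing file through this endpoint also requires the current blob `sha` in the body, and a real call needs an authorization header. Both are omitted here to keep the sketch short.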

Audit Trail

To maintain security and oversight, we built an audit trail that tracks every user interaction with Raia. The system logs details such as timestamps, request contents, actions taken by Raia, and responses. This transaction history provides accountability by recording what users asked Raia to do and how it responded.
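
A minimal sketch of what one audit entry could look like, using the fields listed above (timestamp, request contents, actions taken, response). The `AuditRecord` class, the `user_id` field, and the JSON-lines format are assumptions for illustration; the actual storage layer is not described in the source.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    user_id: str    # hypothetical identifier for the requesting user
    request: str    # what the user asked Raia to do
    actions: list   # actions Raia took, in order
    response: str   # what Raia replied
    # UTC timestamp in ISO 8601 format, set when the record is created.
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def append_record(log_lines: list, record: AuditRecord) -> str:
    """Serialize a record as one JSON line and append it to the log."""
    line = json.dumps(asdict(record), sort_keys=True)
    log_lines.append(line)
    return line
```

Here `log_lines` is an in-memory list standing in for whatever append-only store a deployment would use. One JSON object per line keeps entries easy to search and replay.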

DevOps Library

We integrated a DevOps library to enable AI-powered infrastructure monitoring and management. The library uses natural language processing to translate raw Kubernetes cluster status reports into plain-English summaries. These summaries are posted to Slack, keeping teams aware of infrastructure health.
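
To illustrate the kind of condensing involved, the sketch below turns a list of pod objects into a one-sentence summary. It is a deterministic, rule-based stand-in and not the language-model approach the library uses. The function name `summarize_pods` is hypothetical, and the input is assumed to be shaped like the items returned by `kubectl get pods -o json`.

```python
from collections import Counter


def summarize_pods(pods: list) -> str:
    """Condense pod objects into a short plain-English health summary."""
    if not pods:
        return "No pods found."
    # Count pods per lifecycle phase (for example Running, Pending, Failed).
    phases = Counter(p["status"]["phase"] for p in pods)
    # Treat anything that is neither running nor completed as needing attention.
    unhealthy = sorted(
        p["metadata"]["name"]
        for p in pods
        if p["status"]["phase"] not in ("Running", "Succeeded")
    )
    parts = ", ".join(f"{count} {phase}" for phase, count in sorted(phases.items()))
    summary = f"{len(pods)} pods: {parts}."
    if unhealthy:
        summary += " Needs attention: " + ", ".join(unhealthy) + "."
    return summary
```

A compact summary like this could be posted to Slack directly or passed to the language model as context for a fuller explanation.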